
PROCEEDINGS OF THE ANNUAL SCIENTIFIC CONFERENCE OF DALAT UNIVERSITY 2018

APPLYING IMAGE PRE-PROCESSING AND POST-PROCESSING TO OCR: A CASE STUDY FOR VIETNAMESE BUSINESS CARDS

Thai Duy Quy^a*, Vo Phuong Binh^a, Tran Nhat Quang^a, Phan Thi Thanh Nga^a

^a The Faculty of Information Technology, Dalat University, Lamdong, Vietnam

*Corresponding author: Email: quytd@dlu.edu.vn

Abstract

This paper proposes image pre-processing and Vietnamese post-processing algorithms that make efficient use of the Tesseract open-source Optical Character Recognition (OCR) library. We built a mobile (Android) application and applied it to Vietnamese business cards. The experimental results show that the proposed method, implemented as an Android application, achieves higher accuracy than the original OCR library.

Keywords: Android; OCR; Image pre-processing; Post-processing; Vietnamese Business Card.


APPLYING IMAGE PRE-PROCESSING AND POST-PROCESSING IN THE OPTICAL CHARACTER RECOGNITION PROCESS: A CASE STUDY OF VIETNAMESE BUSINESS CARDS

Thái Duy Quý^a*, Võ Phương Bình^a, Trần Nhật Quang^a, Phan Thị Thanh Nga^a

^a Faculty of Information Technology, Dalat University, Lâm Đồng, Vietnam

*Corresponding author: Email: quytd@dlu.edu.vn

Abstract

This paper proposes an image pre-processing method and a Vietnamese post-processing method for optical character recognition with the open-source Tesseract library. We built an application for the Android operating system and applied the research results to Vietnamese business cards. The results show that the proposed method produces more accurate results than existing applications.

Keywords: Android; Vietnamese business cards; Post-processing; Optical character recognition; Image pre-processing.


1. INTRODUCTION

In daily work, we often receive business cards from friends or partners. A business card usually carries contact information such as a name, address, and phone number. This is the same kind of information a user stores in a smartphone's contact list. Our goal, therefore, is to build an application that extracts the text of a business card and saves the contact information to the smartphone. The Android application captures an image of the card directly with the phone's camera. Noise in the image is then removed, and the image is passed to the Optical Character Recognition (OCR) engine. The engine's output is used to extract the necessary information and save it to the contact list. To improve the efficiency of this extraction process, we developed improved algorithms for image pre-processing and post-processing. Our application is implemented on an Android device and tested with Vietnamese business cards. The OCR engine used in this paper is the Tesseract open-source library.

2. RELATED WORK

OCR systems have been under development in research and industry since the 1950s. They use knowledge-based and statistical pattern recognition techniques to transform scanned or photographed images of text into machine-editable text files (Eason, Noble, & Sneddon, 1955). Shalin, Chopra, Ghadge, and Onkar (2014) developed an early OCR system. They presented image pre-processing techniques, used as the initial step of character recognition systems, and identified feature extraction as the most important step of optical character recognition. To improve recognition accuracy, Mande and Hansheng (2015) and Matteo, Ratko, Matija, and Tihomir (2017) proposed efficient methods to remove background noise and to enhance low-quality images, respectively. In addition, Shivananda and Nagabhushan (2009) proposed an approach that can handle document images with varying backgrounds of multiple colors. Bhaskar, Lavassar, and Green (2015); Pal, Rajani, Poojary, and Prasad (2017); and Yorozu, Hirano, Oka, and Tagawa (1987) presented methods to improve the accuracy of OCR when converting printed words into digital text.

Many OCR applications achieve high accuracy for English (Badla, 2014; Chang & Steven, 2009; Kulkarni, Jadhav, Kalpe, & Kurkut, 2014; Palan, Bhatt, Mehta, Shavdia, & Kambli, 2014; Phan, Nguyen, Nguyen, Thai, & Vo, 2017; Trần, 2013). However, OCR systems for other languages may face several problems. Vietnamese is a tonal language of single syllables (Phan et al., 2017), and its tones are written with diacritics. We did not find any study that reports a 100% recognition rate for Vietnamese, although some applications have been implemented, such as that of Trần (2013). Among commercial applications, CamCard is popular but offers little support for Vietnamese business cards. Business Card Reader Free, available on the Google Play Store, supports Vietnamese, but its accuracy in our experiments was not high.


3. OCR AND TESSERACT

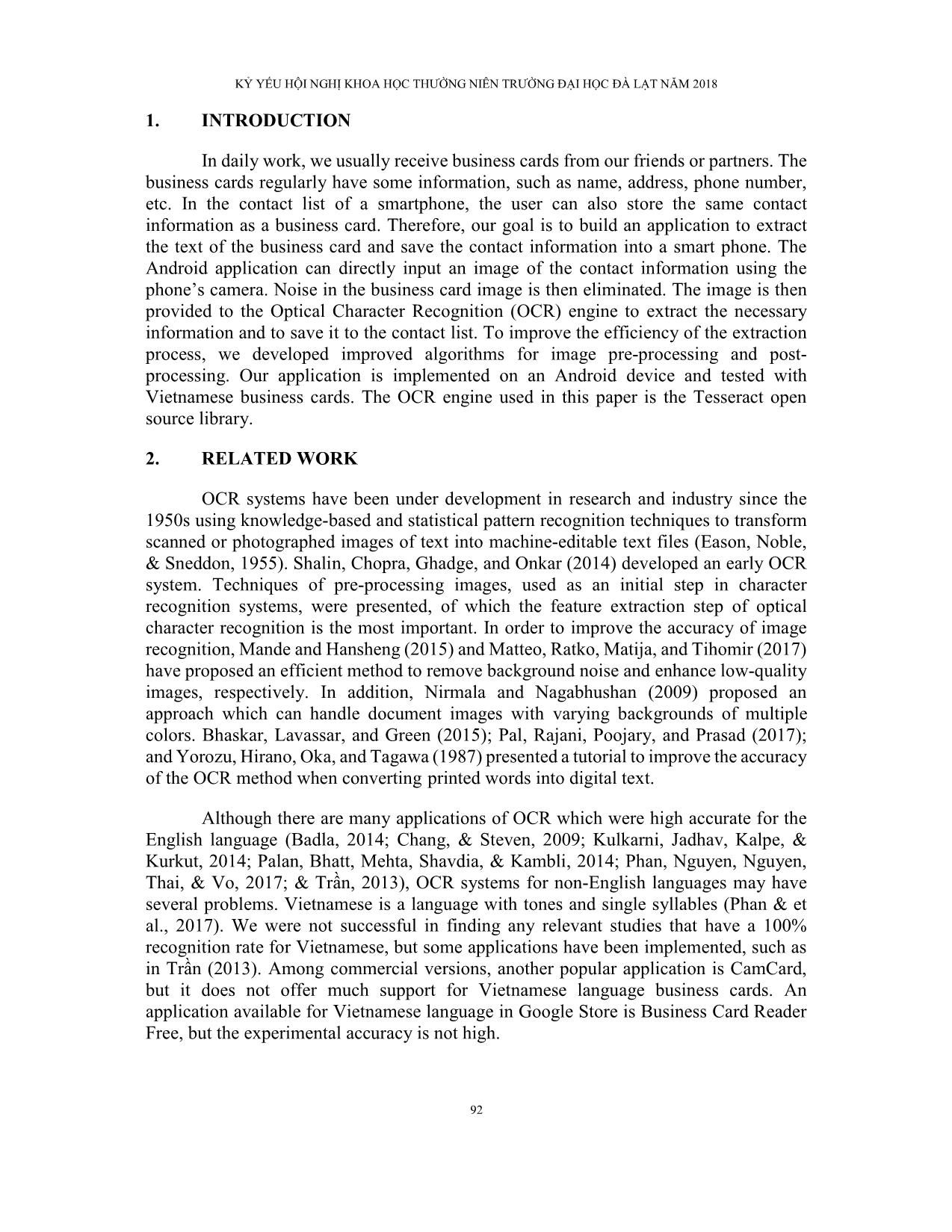

OCR is the technical process which converts scanned images, typewritten, or

printed text into machine encoded text. OCR has been in development for almost 80 years,

as the first patent for an OCR machine was filed in 1929 by a German named Gustav

Tauschek and an American patent was filed subsequently in 1935. OCR has many

applications, including use in the postal service, language translation, and digital libraries.

Currently, OCR is even in the hands of the general public in the form of mobile

applications. The OCR system input images include text which cannot be edited. The

output of the OCR process is editable text from the input images. The OCR process is

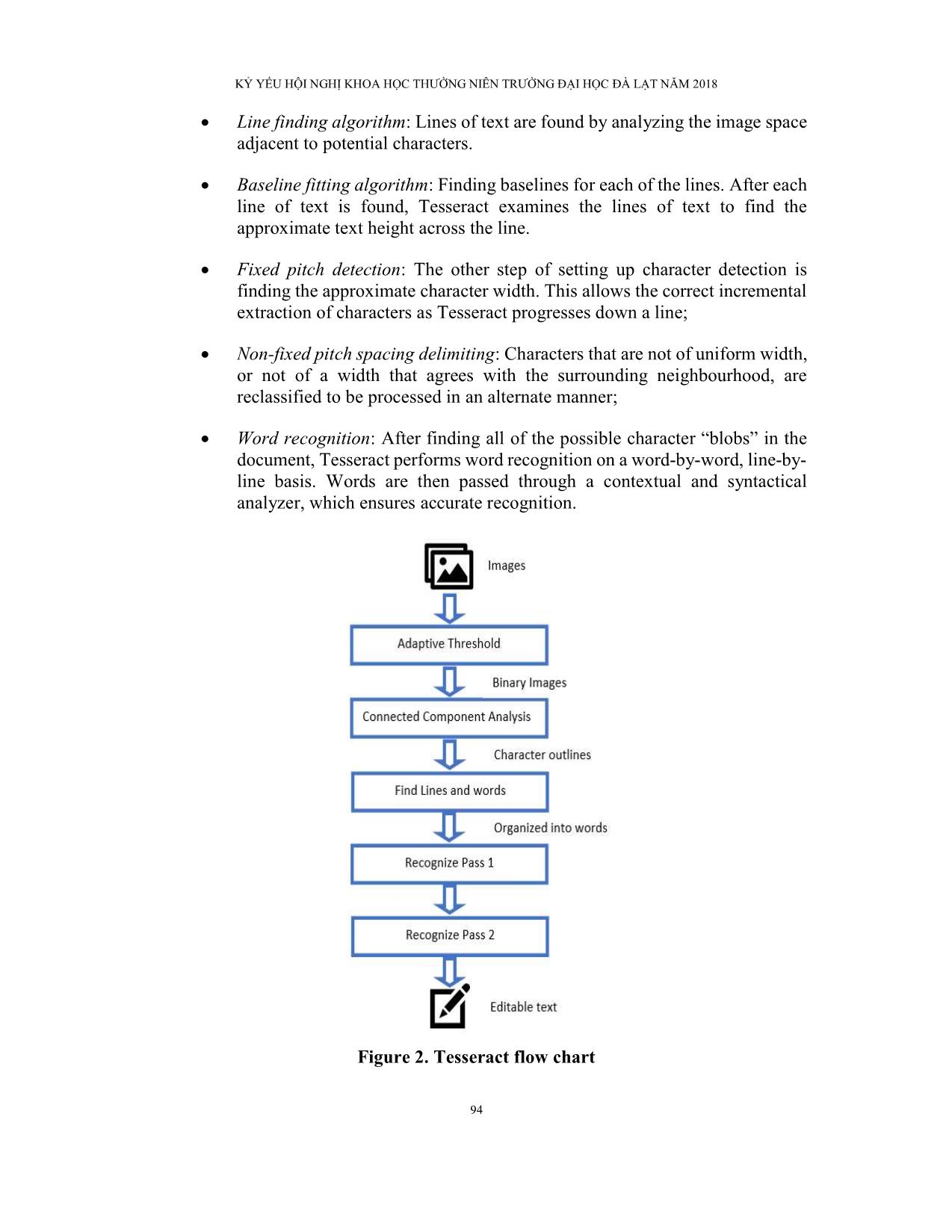

illustrated in Fig. 1.

Figure 1. OCR process

There are a few stages within the OCR process used to convert an image to text.

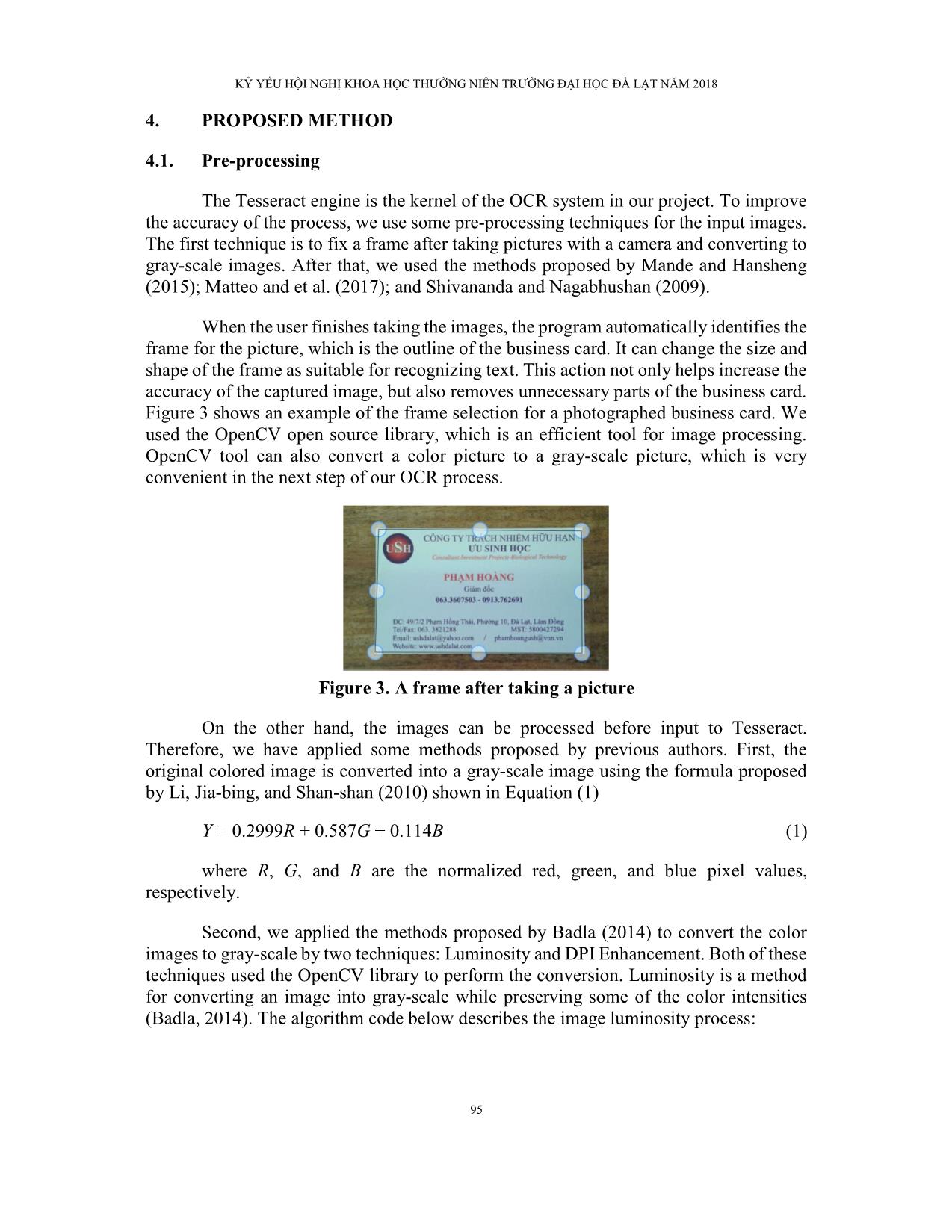

To simplify these steps, we use ... Therefore, we have applied some methods proposed by previous authors. First, the original color image is converted into a gray-scale image using the formula proposed by Li, Jia-Bing, and Shan-shan (2010), shown in Equation (1):

$$Y = 0.299R + 0.587G + 0.114B \tag{1}$$

where $R$, $G$, and $B$ are the normalized red, green, and blue pixel values, respectively.
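As an illustration only (not the authors' code), Equation (1) can be applied pixel by pixel as in the Java sketch below. It assumes 8-bit channels in the packed ARGB layout returned by `BufferedImage.getRGB`. Because the three weights sum to 1, using unnormalized 0..255 values instead of normalized ones simply scales Y by 255. The class and method names are hypothetical.

```java
import java.awt.image.BufferedImage;

/** Gray-scale conversion with the weights of Equation (1): Y = 0.299R + 0.587G + 0.114B. */
class GrayscaleEq1 {

    /** Weighted sum of 8-bit channel values; the weights sum to 1, so the result stays in 0..255. */
    static int luma(int r, int g, int b) {
        return (int) Math.round(0.299 * r + 0.587 * g + 0.114 * b);
    }

    /** Returns a new TYPE_BYTE_GRAY image whose single band holds Y for each input pixel. */
    static BufferedImage toGray(BufferedImage src) {
        BufferedImage gray = new BufferedImage(src.getWidth(), src.getHeight(),
                BufferedImage.TYPE_BYTE_GRAY);
        for (int y = 0; y < src.getHeight(); y++) {
            for (int x = 0; x < src.getWidth(); x++) {
                int rgb = src.getRGB(x, y);          // packed ARGB value of the pixel
                int r = (rgb >> 16) & 0xFF;
                int g = (rgb >> 8) & 0xFF;
                int b = rgb & 0xFF;
                // write the raw sample to avoid any color-space conversion done by setRGB
                gray.getRaster().setSample(x, y, 0, luma(r, g, b));
            }
        }
        return gray;
    }

    public static void main(String[] args) {
        System.out.println(luma(255, 0, 0));      // pure red
        System.out.println(luma(255, 255, 255));  // white
    }
}
```

Writing to the raster directly, rather than calling `setRGB` on the gray image, keeps the stored value equal to Y.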

Second, we applied two techniques proposed by Badla (2014): luminosity and DPI enhancement. Both techniques use the OpenCV library. Luminosity is a method for converting an image into gray-scale while preserving some of the color intensities (Badla, 2014). The code below describes the luminosity process:


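import java.awt.Color;
import java.awt.image.BufferedImage;

class Luminosity {
    // Convert an image (e.g. loaded with javax.imageio.ImageIO.read) to gray-scale in place
    static void apply(BufferedImage image) {
        // iterate over all pixels of the image with the given width and height
        for (int w = 0; w < image.getWidth(); w++) {
            for (int h = 0; h < image.getHeight(); h++) {
                // BufferedImage.getRGB() returns the packed color of the pixel;
                // Color(int) unpacks it into its red, green, and blue components
                Color color = new Color(image.getRGB(w, h));
                // weighted sum of the red, green, and blue components
                int luminosity = (int) (0.2126 * color.getRed() + 0.7152 * color.getGreen()
                        + 0.0722 * color.getBlue());
                // the new gray color has the same value in all three channels
                Color lum = new Color(luminosity, luminosity, luminosity);
                // set the pixel in the image
                image.setRGB(w, h, lum.getRGB());
            }
        }
    }
}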

To get the best results from an image, its resolution must be fixed at a suitable value, because 300 DPI is the minimum acceptable for Tesseract (Badla, 2014). The algorithm for DPI enhancement is as follows:

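// Edge extraction used by the DPI enhancement step, on a gray-scale image
// stored as int[height][width]. The source leaves the two 3x3 matrices empty,
// so Sobel kernels are assumed here; the unused (low, high) parameters of the
// original signature are omitted.
class EdgeExtract {
    static int[][] extract(int[][] img) {
        int[][] kx = {{-1, 0, 1}, {-2, 0, 2}, {-1, 0, 1}};   // difference along x
        int[][] ky = {{-1, -2, -1}, {0, 0, 0}, {1, 2, 1}};   // difference along y
        int height = img.length, width = img[0].length;
        // Java zero-initialises arrays, so no memset is needed; border pixels stay 0
        int[][] magnitude = new int[height][width];
        // compute the x and y differences and the gradient magnitude of each
        // interior pixel (the gradient angle, if needed, is Math.atan2(gy, gx))
        for (int y = 1; y < height - 1; y++) {
            for (int x = 1; x < width - 1; x++) {
                int gx = 0, gy = 0;
                for (int dy = -1; dy <= 1; dy++) {
                    for (int dx = -1; dx <= 1; dx++) {
                        int pixel = img[y + dy][x + dx];
                        gx += pixel * kx[dy + 1][dx + 1];
                        gy += pixel * ky[dy + 1][dx + 1];
                    }
                }
                magnitude[y][x] = Math.min(255, (int) Math.round(Math.hypot(gx, gy)));
            }
        }
        // return the recreated (edge) image
        return magnitude;
    }
}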
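Reaching 300 DPI amounts to rescaling the photo once the physical size of the card is known. The sketch below is a hypothetical illustration, not the method above. It makes three assumptions:

- the card fills the image width;
- the card's physical width in millimetres is passed in by the caller (the paper gives no such value);
- ordinary bilinear scaling through `java.awt.Graphics2D` is good enough for the resize.

```java
import java.awt.Graphics2D;
import java.awt.RenderingHints;
import java.awt.image.BufferedImage;

/** Hypothetical helper: upscale a card photo so that it reaches about 300 DPI. */
class DpiRescale {
    static final int TARGET_DPI = 300;          // minimum acceptable for Tesseract (Badla, 2014)
    static final double MM_PER_INCH = 25.4;

    /** Effective resolution when widthPx pixels span widthMm millimetres of the card. */
    static double effectiveDpi(int widthPx, double widthMm) {
        return widthPx / (widthMm / MM_PER_INCH);
    }

    /** Scale factor needed to reach TARGET_DPI; 1.0 when the image is already fine enough. */
    static double scaleFactor(int widthPx, double widthMm) {
        return Math.max(1.0, TARGET_DPI / effectiveDpi(widthPx, widthMm));
    }

    /** Upscales with bilinear interpolation; images already at 300 DPI keep their size. */
    static BufferedImage upscale(BufferedImage src, double widthMm) {
        double s = scaleFactor(src.getWidth(), widthMm);
        int w = (int) Math.ceil(src.getWidth() * s);
        int h = (int) Math.ceil(src.getHeight() * s);
        BufferedImage dst = new BufferedImage(w, h, BufferedImage.TYPE_INT_RGB);
        Graphics2D g = dst.createGraphics();
        g.setRenderingHint(RenderingHints.KEY_INTERPOLATION,
                RenderingHints.VALUE_INTERPOLATION_BILINEAR);
        g.drawImage(src, 0, 0, w, h, null);
        g.dispose();
        return dst;
    }
}
```

For example, a 90 mm wide card photographed at 1,063 pixels across is already at about 300 DPI, so `scaleFactor` returns 1.0. Half that width gives a factor of about 2.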

Finally, for low-quality images and for images with a background, we use the methods proposed by Matteo et al. (2017) and Mande and Hansheng (2015), respectively. Tesseract requires a minimum text size for reasonable accuracy: if the x-height of the text is below 20 px, accuracy drops off. The first pre-processing method of Matteo et al. (2017) resizes the image so that its height is 100 px. Resizing is applied only if the original image is less than 100 px high. The second method of Matteo et al. (2017) is image sharpening. Its main purpose is to enhance contrast at edges, that is, between text and background. Sharpening is achieved by unsharp masking, based on the mask given in Equation (2):

$$g(i,j) = f(i,j) - f_{\text{smooth}}(i,j) \tag{2}$$
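The resizing rule and Equation (2) can be sketched on a gray image held as `double[rows][cols]`. This is a minimal illustration under stated assumptions, not the implementation of Matteo et al. (2017):

- $f_{\text{smooth}}$ is taken to be a 3 × 3 mean filter;
- the mask $g$ is added back to $f$ with a caller-chosen weight $k$, which is the usual unsharp-masking step;
- results are clamped to the range 0..255.

All names are hypothetical.

```java
/** Resizing rule and unsharp masking (Equation (2)) on a gray image stored as double[rows][cols]. */
class SharpenSketch {

    /** Images lower than this are scaled up to it; taller images are left unchanged. */
    static final int MIN_HEIGHT = 100;

    /** Returns the new {width, height}, keeping the aspect ratio. */
    static int[] resizedSize(int width, int height) {
        if (height >= MIN_HEIGHT) return new int[] {width, height};
        int newWidth = (int) Math.round(width * (double) MIN_HEIGHT / height);
        return new int[] {newWidth, MIN_HEIGHT};
    }

    /** 3x3 mean filter used here as f_smooth (an assumption); border coordinates are clamped. */
    static double[][] smooth(double[][] f) {
        int rows = f.length, cols = f[0].length;
        double[][] out = new double[rows][cols];
        for (int i = 0; i < rows; i++) {
            for (int j = 0; j < cols; j++) {
                double sum = 0;
                for (int di = -1; di <= 1; di++) {
                    for (int dj = -1; dj <= 1; dj++) {
                        int r = Math.min(rows - 1, Math.max(0, i + di));
                        int c = Math.min(cols - 1, Math.max(0, j + dj));
                        sum += f[r][c];
                    }
                }
                out[i][j] = sum / 9.0;
            }
        }
        return out;
    }

    /** Computes g = f - f_smooth (Equation (2)) and returns f + k*g clamped to 0..255. */
    static double[][] unsharpMask(double[][] f, double k) {
        double[][] fs = smooth(f);
        double[][] out = new double[f.length][f[0].length];
        for (int i = 0; i < f.length; i++) {
            for (int j = 0; j < f[0].length; j++) {
                double g = f[i][j] - fs[i][j];
                out[i][j] = Math.min(255, Math.max(0, f[i][j] + k * g));
            }
        }
        return out;
    }
}
```

On a step from 50 to 200, the dark side of the edge is pushed darker and the bright side brighter. This is the contrast boost the text describes.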


A smoothed image $f_{\text{smooth}}$ is subtracted from the original image $f$ to obtain the detail mask $g$. Unsharp masking then adds this mask back to $f$ to sharpen the image. The third method proposed by Matteo et al. (2017) is image blurring. It reduces high-frequency information and removes noise from the images, which could otherwise lower the OCR accuracy rate. Blurring is achieved by applying a low-pass filter to the analyzed image $f$: each pixel is replaced by the average of all the values in its local 9 × 9 pixel neighborhood, as in Equation (3).

$$f_{\text{blur}}(i,j) = \frac{1}{81}\sum_{k=-4}^{4}\sum_{l=-4}^{4} f(i+k,\, j+l) \tag{3}$$
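Equation (3) is a plain mean (box) filter. In the Java sketch below, which is illustrative only, the window size is a parameter (9 in the text). Near the image border, only pixels inside the image are averaged. The text does not say how borders are handled, so that choice is an assumption.

```java
/** Low-pass (mean) filter of Equation (3): each pixel becomes the average of its size x size window. */
class MeanBlur {

    /**
     * Averages over a size x size window (size must be odd; 9 in the text).
     * Near the border only the pixels inside the image are averaged (an assumption).
     */
    static double[][] blur(double[][] f, int size) {
        if (size % 2 == 0) throw new IllegalArgumentException("window size must be odd");
        int half = size / 2, rows = f.length, cols = f[0].length;
        double[][] out = new double[rows][cols];
        for (int i = 0; i < rows; i++) {
            for (int j = 0; j < cols; j++) {
                double sum = 0;
                int count = 0;
                for (int r = Math.max(0, i - half); r <= Math.min(rows - 1, i + half); r++) {
                    for (int c = Math.max(0, j - half); c <= Math.min(cols - 1, j + half); c++) {
                        sum += f[r][c];
                        count++;
                    }
                }
                out[i][j] = sum / count;   // equals the 1/81 average of Equation (3) away from borders
            }
        }
        return out;
    }
}
```

This direct version costs O(size²) per pixel. For a 9 × 9 window, a summed-area table would compute the same averages in constant time per pixel.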

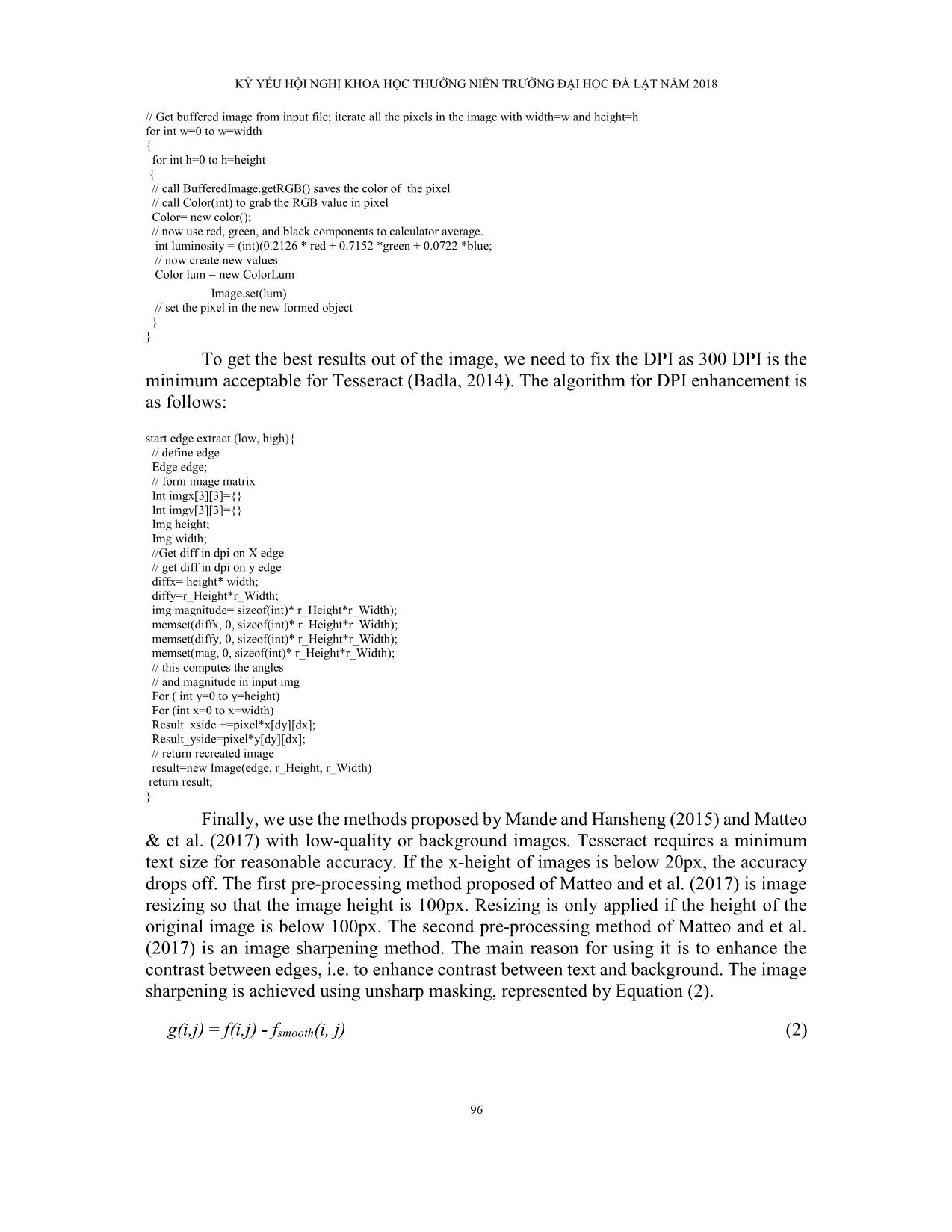

Mande and Hansheng (2015) proposed methods for images that have a background. The methods are based on a color model in RGB space (Figure 4). We applied this approach, using the brightness distortion ($\alpha_i$) and the chromaticity distortion ($CD_i$) to enhance a document image and make its background easier to remove. The brightness distortion $\alpha_i$ is the value that minimizes the objective function in Equation (4):

$$\phi(\alpha_i) = \left\lVert p_i - \alpha_i E_i \right\rVert^2 \tag{4}$$

where $p_i$ is the observed color of the pixel and $E_i$ its expected color. The value of $\alpha_i$ depends on how the pixel compares with the reference image:

- $\alpha_i = 1$ if the pixel has the same brightness in the current image as in the reference image;
- $\alpha_i < 1$ if the pixel is dimmer than the expected brightness;
- $\alpha_i > 1$ if it is brighter.

Once $\alpha_i$ is determined, $CD_i$ is obtained from Equation (5):

$$CD_i = \left\lVert p_i - \alpha_i E_i \right\rVert \tag{5}$$

Figure 4. Color model in RGB space. $E_i$ represents the expected color of pixel $p_i$ in the current image. The difference between $p_i$ and $E_i$ is decomposed into brightness distortion ($\alpha_i$) and chromaticity distortion ($CD_i$).

Source: Mande and Hansheng (2015).
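Equations (4) and (5) have a simple closed form. Setting the derivative of $\lVert p_i - \alpha_i E_i \rVert^2$ with respect to $\alpha_i$ to zero gives $\alpha_i = (p_i \cdot E_i)/(E_i \cdot E_i)$, the projection of $p_i$ onto the line through $E_i$. The Java sketch below computes both quantities for one pixel. This derivation is ours, not stated by Mande and Hansheng (2015). Any per-channel normalization or thresholds they use are not modelled, and the names are hypothetical.

```java
/** Brightness distortion (Equation (4)) and chromaticity distortion (Equation (5)) for one RGB pixel. */
class ColorDistortion {

    /**
     * alpha minimising ||p - alpha*E||^2; setting the derivative to zero gives
     * alpha = (p . E) / (E . E). The expected color E must not be all zeros.
     */
    static double brightness(double[] p, double[] e) {
        double pe = 0, ee = 0;
        for (int k = 0; k < 3; k++) {
            pe += p[k] * e[k];
            ee += e[k] * e[k];
        }
        if (ee == 0) throw new IllegalArgumentException("expected color E must be non-zero");
        return pe / ee;
    }

    /** CD = ||p - alpha*E||, the distance from p to the line through the origin and E. */
    static double chromaticity(double[] p, double[] e) {
        double alpha = brightness(p, e);
        double sum = 0;
        for (int k = 0; k < 3; k++) {
            double d = p[k] - alpha * e[k];
            sum += d * d;
        }
        return Math.sqrt(sum);
    }
}
```

A pixel with $\alpha_i$ near 1 and a small $CD_i$ is close to its expected color. A pixel with the same hue but a different shade has $\alpha_i \neq 1$ and $CD_i \approx 0$. The thresholds that separate background from text are not given here.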

4.2. Post-Processing

OCR, including Tesseract, is used in many applications today. In this project, we studied and applied OCR only for business cards, so we were concerned with only four items:

- i) name or organization;
- ii) telephone number;
- iii) email;
- iv) organization address.

There are two main techniques for extracting textual information from images: i) regular expressions (user-defined rules) and ii) statistical machine learning (Trần, 2013). In this study, we used regular expressions, together with rules based on the Vietnamese language, to obtain the necessary information.

The editable text returned by the OCR process consists of multiple lines. Each item of information on a business card is usually short, and the first characters of a line often indicate its content. Telephone numbers and email addresses are extracted with regular expressions, whereas names and addresses are identified using Vietnamese language conventions. For email addresses and phone numbers, we used the regular expressions provided by Kipalog (2018).

The regular expression for the email address is Expression (6):

/[A-Z0-9._%+-]+@[A-Z0-9-]+.+.[A-Z]{2,4}/igm (6)

Similarly, the regular expression for phone numbers is Expression (7):

(\\(\\d+\\)+[\\s-.]*)*(\\d+[\\s-.]*)+ (7)

When the algorithm finds a phone number, it also classifies it as a mobile or a home number. On most business cards, a phone number is written either as an unbroken sequence of digits or as groups of digits separated by special characters such as spaces, dots, or dashes. The algorithm therefore includes special cases for these separators to improve post-processing.
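The following Java sketch shows how Expressions (6) and (7) might be applied to OCR output with `java.util.regex`. It departs from the cited expressions in two ways:

- The dots in Expression (6) are unescaped, so they match any character. Here they are escaped, so only a literal dot separates domain labels.
- In Expression (7), the hyphen is moved to the end of each character class so that it is unambiguously literal.

The nine-digit minimum and the mobile-number rule (10 digits starting with 03, 05, 07, 08, or 09) are hypothetical assumptions, not the authors' exceptions.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Regular-expression extraction of emails and phone numbers from OCR output. */
class ContactRegex {

    // Expression (6) with escaped dots so that only a literal '.' separates domain labels.
    static final Pattern EMAIL = Pattern.compile(
            "[A-Z0-9._%+-]+@[A-Z0-9-]+(?:\\.[A-Z0-9-]+)*\\.[A-Z]{2,4}", Pattern.CASE_INSENSITIVE);

    // Expression (7); the hyphen is placed last in each class so it is a literal character.
    static final Pattern PHONE = Pattern.compile("(\\(\\d+\\)+[\\s.-]*)*(\\d+[\\s.-]*)+");

    static List<String> emails(String text) {
        List<String> found = new ArrayList<>();
        Matcher m = EMAIL.matcher(text);
        while (m.find()) found.add(m.group());
        return found;
    }

    /** Phone candidates with separators removed; short digit runs (house numbers etc.) are skipped. */
    static List<String> phones(String text) {
        List<String> found = new ArrayList<>();
        Matcher m = PHONE.matcher(text);
        while (m.find()) {
            String digits = m.group().replaceAll("\\D", "");
            if (digits.length() >= 9) found.add(digits);   // hypothetical minimum length
        }
        return found;
    }

    /** Hypothetical rule: 10 digits starting with 03, 05, 07, 08 or 09 is a mobile number. */
    static boolean isMobile(String digits) {
        return digits.matches("0[35789]\\d{8}");
    }
}
```

Two usage examples:

- For the line `Tel: (0263) 3822 246 - Mobile: 0912 345 678`, `phones` yields `02633822246` and `0912345678`. Only the second is classified as mobile.
- The original Expression (6) would also accept a string such as `a@b-cd xyz.com`, which the escaped version rejects.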

For names, the algorithm checks whether a line contains a Vietnamese family name by comparing it against a stored list of family names. If it does not, all the words in the line are saved as the organization name. For addresses, the algorithm checks whether the line contains a heading such as the Vietnamese “Đc:” or “Địa chỉ:” (both meaning “Address:”), the English “Add:” or “Address:”, or any of these words in uppercase. If such a heading exists, the line is taken as the address. Otherwise, the algorithm searches the line for the name of a Vietnamese province, using a stored list similar to the family-name list.
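The name and address rules can be sketched as below. The word lists are tiny hypothetical samples rather than the stored lists described above. Text is normalized to Unicode NFC because the same Vietnamese letter can be encoded in composed or decomposed form. Two design choices are ours:

- A line counts as a name only if it has at least two words and its first word is a family name.
- The address test runs first. Many Vietnamese streets are named after historical figures (for example, Trần Hưng Đạo), so an address line would otherwise look like a personal name.

```java
import java.text.Normalizer;
import java.util.Arrays;
import java.util.List;
import java.util.Locale;

/** Rule-based labelling of an OCR line as a person's name, an address, or an organization name. */
class VietnameseLineRules {

    // Hypothetical, abbreviated lists; a real system would store all family names and provinces.
    static final List<String> FAMILY_NAMES = Arrays.asList("Nguyễn", "Trần", "Lê", "Phạm", "Thái", "Võ", "Phan");
    static final List<String> PROVINCES = Arrays.asList("Lâm Đồng", "Hà Nội", "Đà Nẵng", "Khánh Hòa");
    static final List<String> ADDRESS_HEADINGS = Arrays.asList("đc:", "địa chỉ:", "add:", "address:");

    enum Kind { NAME, ORGANIZATION, ADDRESS }

    /** Composed and decomposed diacritics compare equal after NFC normalization. */
    static String nfc(String s) {
        return Normalizer.normalize(s, Normalizer.Form.NFC);
    }

    /** Heading test (case-insensitive via lowercasing), then province-name test. */
    static boolean isAddress(String line) {
        String lower = nfc(line).toLowerCase(Locale.ROOT).trim();
        for (String h : ADDRESS_HEADINGS) {
            if (lower.startsWith(h)) return true;
        }
        for (String p : PROVINCES) {
            if (lower.contains(nfc(p).toLowerCase(Locale.ROOT))) return true;
        }
        return false;
    }

    /** A line of two or more words whose first word is a known family name. */
    static boolean isPersonName(String line) {
        String[] words = nfc(line).trim().split("\\s+");
        if (words.length < 2) return false;
        for (String f : FAMILY_NAMES) {
            if (words[0].equalsIgnoreCase(nfc(f))) return true;
        }
        return false;
    }

    /** Lines that are neither addresses nor names are kept as the organization name. */
    static Kind classify(String line) {
        if (isAddress(line)) return Kind.ADDRESS;
        if (isPersonName(line)) return Kind.NAME;
        return Kind.ORGANIZATION;
    }
}
```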

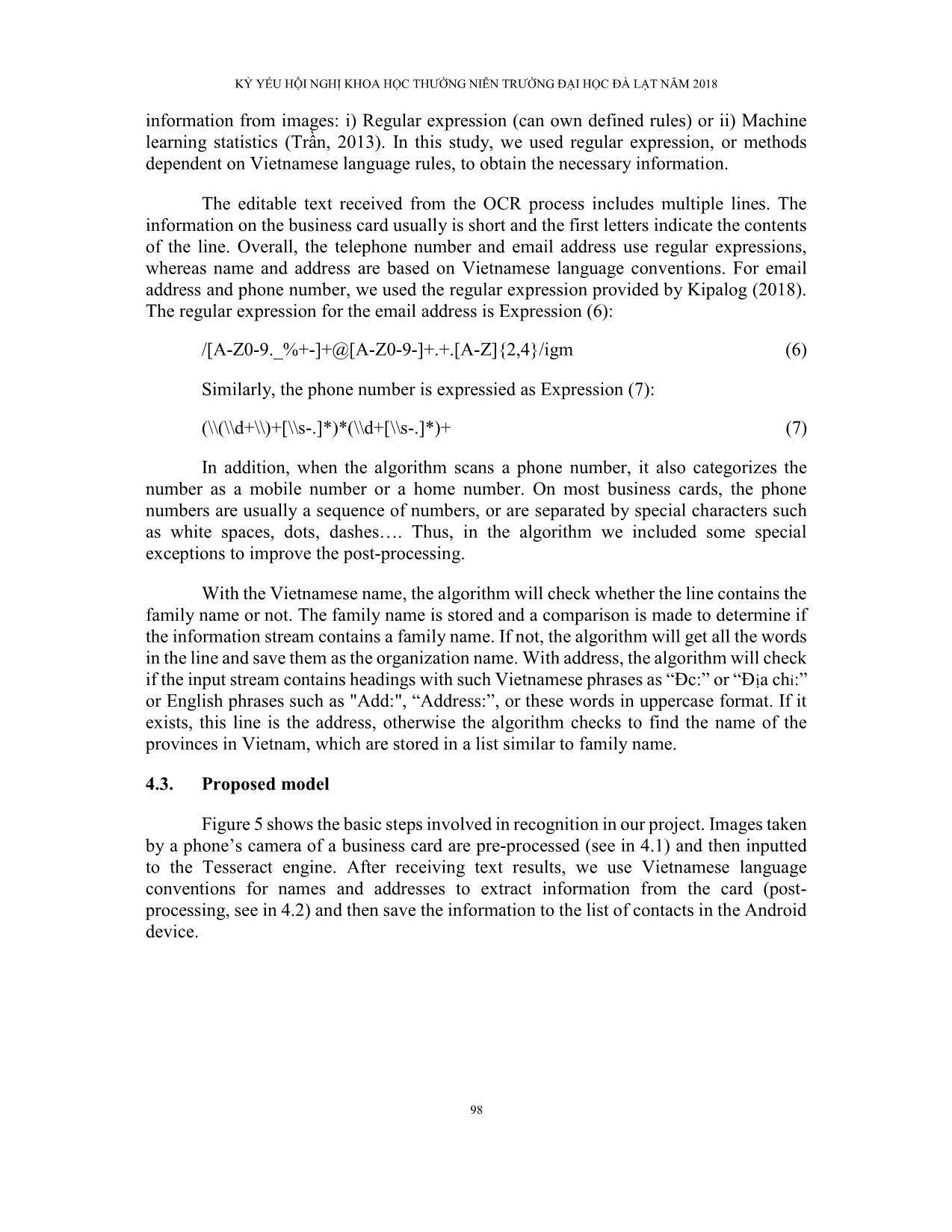

4.3. Proposed model

Figure 5 shows the basic steps involved in recognition in our project. Images taken

by a phone’s camera of a business card are pre-processed (see in 4.1) and then inputted

to the Tesseract engine. After receiving text results, we use Vietnamese language

conventions for names and addresses to extract information from the card (post-

processing, see in 4.2) and then save the information to the list of contacts in the Android

device.

KỶ YẾU HỘI NGHỊ KHOA HỌC THƯỜNG NIÊN TRƯỜNG ĐẠI HỌC ĐÀ LẠT NĂM 2018

99

Figure 5. Proposed structural model
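The flow in Figure 5 is a chain of four stages. The Java sketch below only wires hypothetical stage functions together. It does not show the Tesseract or Android contact APIs; the caller would supply those as the `recognize` and `save` functions.

```java
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.UnaryOperator;

/** Stages of Figure 5 wired together; every stage is supplied by the caller (hypothetical design). */
final class CardPipeline<I, C> {
    private final UnaryOperator<I> preprocess;       // image pre-processing (Section 4.1)
    private final Function<I, String> recognize;     // OCR engine, e.g. a wrapper around Tesseract
    private final Function<String, C> postprocess;   // field extraction (Section 4.2)
    private final Consumer<C> save;                  // e.g. writing to the device's contact list

    CardPipeline(UnaryOperator<I> preprocess, Function<I, String> recognize,
                 Function<String, C> postprocess, Consumer<C> save) {
        this.preprocess = preprocess;
        this.recognize = recognize;
        this.postprocess = postprocess;
        this.save = save;
    }

    /** Runs one photo through all stages, saves the extracted contact, and returns it. */
    C run(I photo) {
        C contact = preprocess.andThen(recognize).andThen(postprocess).apply(photo);
        save.accept(contact);
        return contact;
    }
}
```

Keeping each stage behind a function type lets the pre-processing steps or the OCR engine be swapped or tested in isolation.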

5. RESULTS

We have successfully implemented an application called Vietnamese Card Scan (VnCS) on the Android OS. The experiment was run on a Samsung Galaxy Tab E tablet with Android 4.4.4. The APK file is 26.5 MB. Figure 6 shows the program running on the device. The test data comprise 250 Vietnamese business cards of three types, as presented in Table 1.

Figure 6. The ScanVnCard program on a Samsung Galaxy Tab E


Table 1. Collected business card data

| Type | Features | Quantity |
|---|---|---|
| No. 1 | Distinct background and text, no background image (wallpaper) | 135 |
| No. 2 | Distinct background and text, with a background image (wallpaper) | 75 |
| No. 3 | Text and background of the same color, or logos, pictures, or characters that are difficult to identify | 40 |

Four types of information are extracted: i) name or organization; ii) phone numbers; iii) email; and iv) address. Table 2 shows the extraction accuracy for each type of information and each card type. Figure 7 shows an original Vietnamese business card, the same card after pre-processing, the editable text produced by OCR, and the information saved to the contact list.

Table 2. Results for four types of information extracted from business cards

| Information | No. 1 (%) | No. 2 (%) | No. 3 (%) |
|---|---|---|---|
| Name or organization | 90 | 70 | 60 |
| Phone numbers | 90 | 80 | 70 |
| Email | 80 | 60 | 50 |
| Address | 70 | 60 | 60 |
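Table 2 reports accuracy separately for each card type. Assume each percentage is the share of cards of that type from which the field was extracted correctly. Then an overall figure per field is the average weighted by the card counts in Table 1. The Java sketch below performs this arithmetic; the resulting numbers are derived here and are not reported in the paper.

```java
/** Card-weighted accuracy per field, combining Table 1 (card counts) and Table 2 (accuracy by type). */
class WeightedAccuracy {
    static final int[] CARDS = {135, 75, 40};   // card types No. 1, No. 2, No. 3 (Table 1)

    /** Sum of accuracy x card count over the three types, divided by the total number of cards. */
    static double weighted(int[] accuracyPercent) {
        double num = 0;
        int total = 0;
        for (int t = 0; t < CARDS.length; t++) {
            num += accuracyPercent[t] * CARDS[t];
            total += CARDS[t];
        }
        return num / total;
    }

    public static void main(String[] args) {
        String[] fields = {"Name or organization", "Phone numbers", "Email", "Address"};
        int[][] table2 = {{90, 70, 60}, {90, 80, 70}, {80, 60, 50}, {70, 60, 60}};
        for (int f = 0; f < fields.length; f++) {
            System.out.printf("%-22s %.1f%%%n", fields[f], weighted(table2[f]));
        }
    }
}
```

Run as is, it prints 79.2%, 83.8%, 69.2%, and 65.4% for name or organization, phone numbers, email, and address, respectively.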

Figure 7. An example of our OCR process on a Vietnamese business card: (a) original business card; (b) pre-processing; (c) editable text; and (d) saving to the contact list.


6. CONCLUSIONS

This paper has described a mobile image-to-text recognition system, implemented as an Android application for Vietnamese business cards. The image is captured with the camera and pre-processed by various techniques. It is then processed with an OCR engine to produce editable text on screen. Finally, the necessary information is extracted by post-processing and saved to the contact list. The results show that the proposed method achieves higher efficiency and accuracy than the original software. In future work, we will make the program run faster and deploy it on other operating systems.

REFERENCES

Badla, S. (2014). Improving the efficiency of Tesseract OCR engine. Retrieved from https://scholarworks.sjsu.edu/cgi/viewcontent.cgi?referer=https://www.google.com/&httpsredir=1&article=1416&context=etd_projects

Bhaskar, S., Lavassar, N., & Green, S. (2015). Implementing optical character recognition on the Android operating system for business cards. Retrieved from https://stacks.stanford.edu/file/druid:rz261ds9725/Bhaskar_Lavassar_Green_BusinessCardRecognition.pdf

Chang, L. Z., & Steven, Z. Z. (2009). Robust pre-processing techniques for OCR applications on mobile devices. Paper presented at The International Conference on Mobile Technology, Application & Systems, France.

Eason, G., Noble, B., & Sneddon, I. N. (1955). On certain integrals of Lipschitz-Hankel type involving products of Bessel functions. Phil. Trans. Roy. Soc., A247, 529-551.

Kipalog. (2018). 30 đoạn biểu thức chính quy mà lập trình viên Web nên biết [30 regular expressions that web developers should know]. Retrieved from https://kipalog.com/posts/30-doan-bieu-thuc-chinh-quy-ma-lap-trinh-vien-web-nen-biet

Koistinen, M., Kettunen, K., & Kervinen, J. (2017). How to improve optical character recognition of historical Finnish newspapers using open source Tesseract OCR engine. Paper presented at The Language & Technology Conference: Human Language Technologies as a Challenge for Computer Science and Linguistics, Poland.

Kulkarni, S. S., Jadhav, V., Kalpe, A., & Kurkut, V. (2014). Android card reader application using OCR. International Journal of Advanced Research in Computer and Communication Engineering, 3, 5238-5239.

Li, J., Jia-Bing, H. D., & Shan-shan, Z. (2010). A novel algorithm for color space conversion model from CMYK to LAB. Journal of Multimedia, 5(2), 159-166.

Mande, S., & Hansheng, L. (2015). Improving OCR performance with background image elimination. Paper presented at The International Conference on Fuzzy Systems and Knowledge Discovery, China.


Matteo, B., Ratko, G., Matija, P., & Tihomir, M. (2017). Improving optical character recognition performance for low quality images. Paper presented at The International Symposium ELMAR, Croatia.

Pal, I., Rajani, M., Poojary, A., & Prasad, P. (2017). Implementation of image to text conversion using Android app. International Journal of Advanced Research in Electrical, Electronics and Instrumentation Engineering, 6, 2291-2297.

Palan, D. R., Bhatt, G. B., Mehta, K. J., Shavdia, K. J., & Kambli, M. (2014). OCR on Android-travelmate. International Journal of Advanced Research in Computer and Communication Engineering, 3, 5810-5812.

Phan, T. T. N., Nguyen, T. H. T., Nguyen, V. P., Thai, D. Q., & Vo, P. B. (2017). Vietnamese text extraction from book covers. Dalat University Journal of Science, 7(2), 142-152.

Shalin, Chopra, A., Ghadge, A. A., & Onkar, A. P. (2014). Optical character recognition. International Journal of Advanced Research in Computer and Communication Engineering, 3, 214-219.

Shivananda, N., & Nagabhushan, P. (2009). Separation of foreground text from complex background in color document images. Paper presented at The Seventh International Conference on Advances in Pattern Recognition, India.

Trần, Đ. H. (2013). Ứng dụng nhận dạng danh thiếp tiếng việt và cập nhật thông tin danh bạ trên Android [A Vietnamese business card recognition application with contact list updating on Android]. Retrieved from https://text.123doc.org/document/2558917-ung-dung-nhan-dang-danh-thiep-tieng-viet-va-cap-nhat-thong-tin-danh-ba-tren-android-full-soure-code.htm

Yorozu, Y., Hirano, M., Oka, K., & Tagawa, Y. (1987). Electron spectroscopy studies on magneto-optical media and plastic substrate interface. IEEE Transl. J. Magn., 2, 740-741.

Zhou, S. Z., Gilani, S. O., & Winkler, S. (2016). Open source OCR framework using mobile devices. SPIE-IS&T, 6821, 1-6.
